
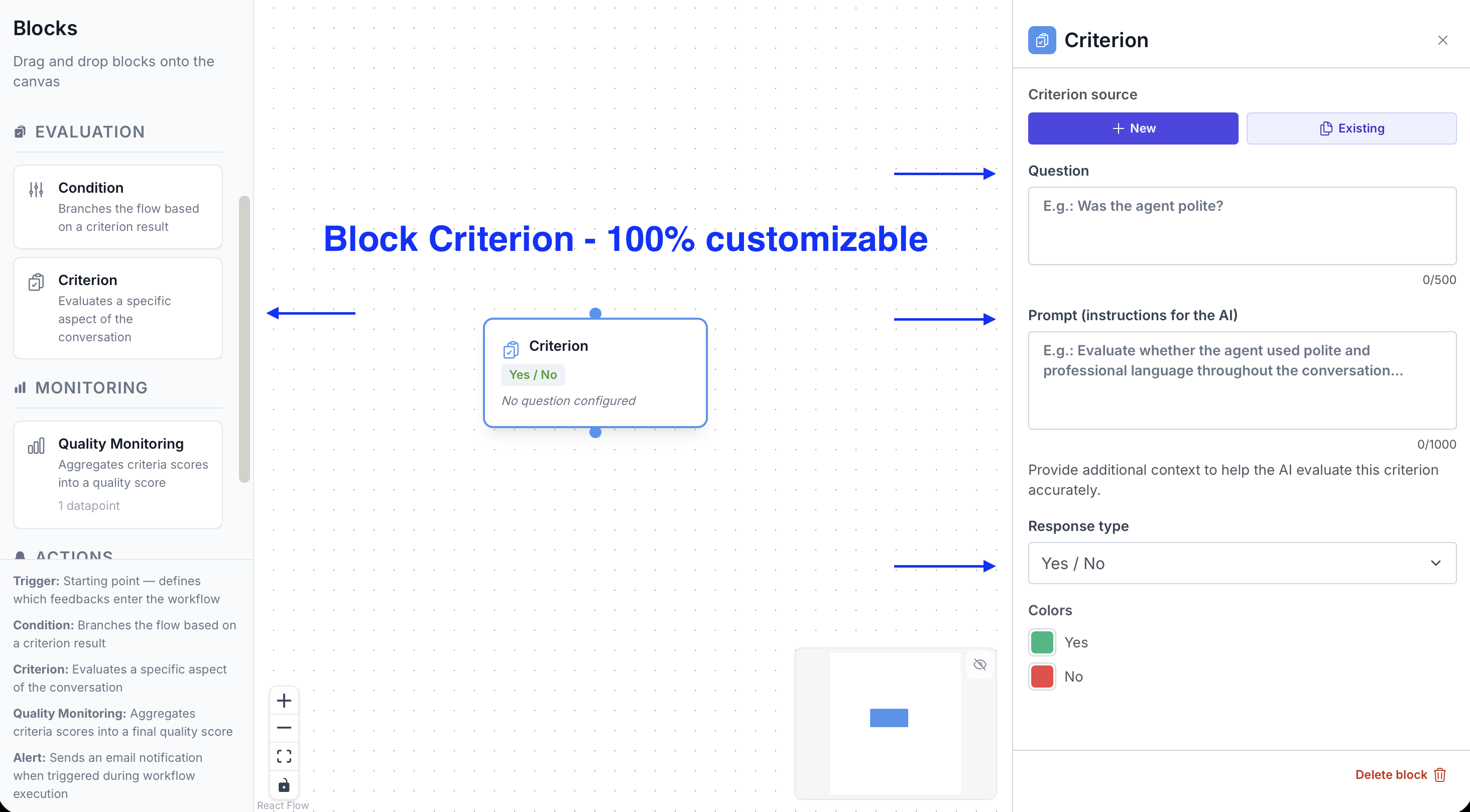

A Criterion evaluates a specific dimension of the conversation, such as agent response quality, issue detection, procedure compliance, or customer satisfaction. You can add as many Criteria as needed in a single flow.

Give the Criterion a clear name that reflects what it evaluates. This name appears in the dashboard results and in Alert variables.

Write the question or instruction you're submitting to the AI. The more precise the prompt, the more reliable the response.

Examples:

Choose how the AI scores the conversation:

Criteria are the evaluation blocks of the flow. Each Criterion asks the AI a question about the content of a conversation and generates a score.
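
As a rough mental model only, the relationship between a flow, its Criteria, and the resulting scores can be sketched in Python. This is not Gravite's API: the `Criterion` and `Flow` classes, the `ask_ai` callable, the `fake_ai` placeholder scorer, and the sample names and prompts are all hypothetical and exist only to illustrate the idea that each Criterion pairs a name with a prompt and produces one score per conversation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Criterion:
    """Hypothetical evaluation block: a display name plus the prompt sent to the AI."""
    name: str    # the label shown in results
    prompt: str  # the question or instruction submitted to the AI


@dataclass
class Flow:
    """Hypothetical flow holding any number of Criteria."""
    criteria: List[Criterion] = field(default_factory=list)

    def evaluate(
        self, conversation: str, ask_ai: Callable[[str, str], float]
    ) -> Dict[str, float]:
        # Each Criterion asks its own question about the same conversation
        # and yields one score, keyed by the Criterion's name.
        return {c.name: ask_ai(c.prompt, conversation) for c in self.criteria}


def fake_ai(prompt: str, conversation: str) -> float:
    """Placeholder scorer, not a real model: 1.0 if the text contains 'sorry', else 0.0."""
    return 1.0 if "sorry" in conversation.lower() else 0.0


flow = Flow([
    Criterion(name="Apology offered", prompt="Did the agent apologize for the issue?"),
    Criterion(name="Empathy", prompt="Did the agent acknowledge the customer's frustration?"),
])
results = flow.evaluate("Agent: I'm sorry about the delay.", fake_ai)
```

Keying the results by Criterion name mirrors why a clear, distinct name matters: it is the label under which each score is read back.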
